Six Pillars of Trustworthy AI

Where financial AI fails – the risks and challenges on the path to production

Simon Gregory | CTO & Co-Founder

MPhys Physics, University of Warwick


Foreword

In more than a decade of building financial AI systems in production, I have consistently found myself making the same engineering choice: preserve the information, adapt the system. Not the other way around.

I call this the Information First principle. It is not a methodology I arrived at through theory; I discovered it through building, through the accumulated weight of every decision about what to discard, what to flatten, and what to sacrifice to fit the constraints of the system. Every time I chose to preserve the information, the system got better. Every time I sacrificed it, something broke downstream. Eventually the pattern became a principle.

That principle deserves its own treatment, and will receive it. But it is the current that runs beneath everything in this document.

The work that produced it predates the current generation of language models. When I began applying that principle to AI systems for tier-1 clients in production, the gaps became immediately apparent, and they were structural. The architecture, the principles, and the failure modes each emerged from building financial AI under real regulatory scrutiny, with real consequences for getting it wrong. When language models arrived, they validated the principles by failing in exactly the ways I had already designed against. These principles were tested in production at tier-1 institutions under conditions designed to challenge them. They held, and they survived multiple adversarial evaluations. The Six Pillars are what I found during this process.

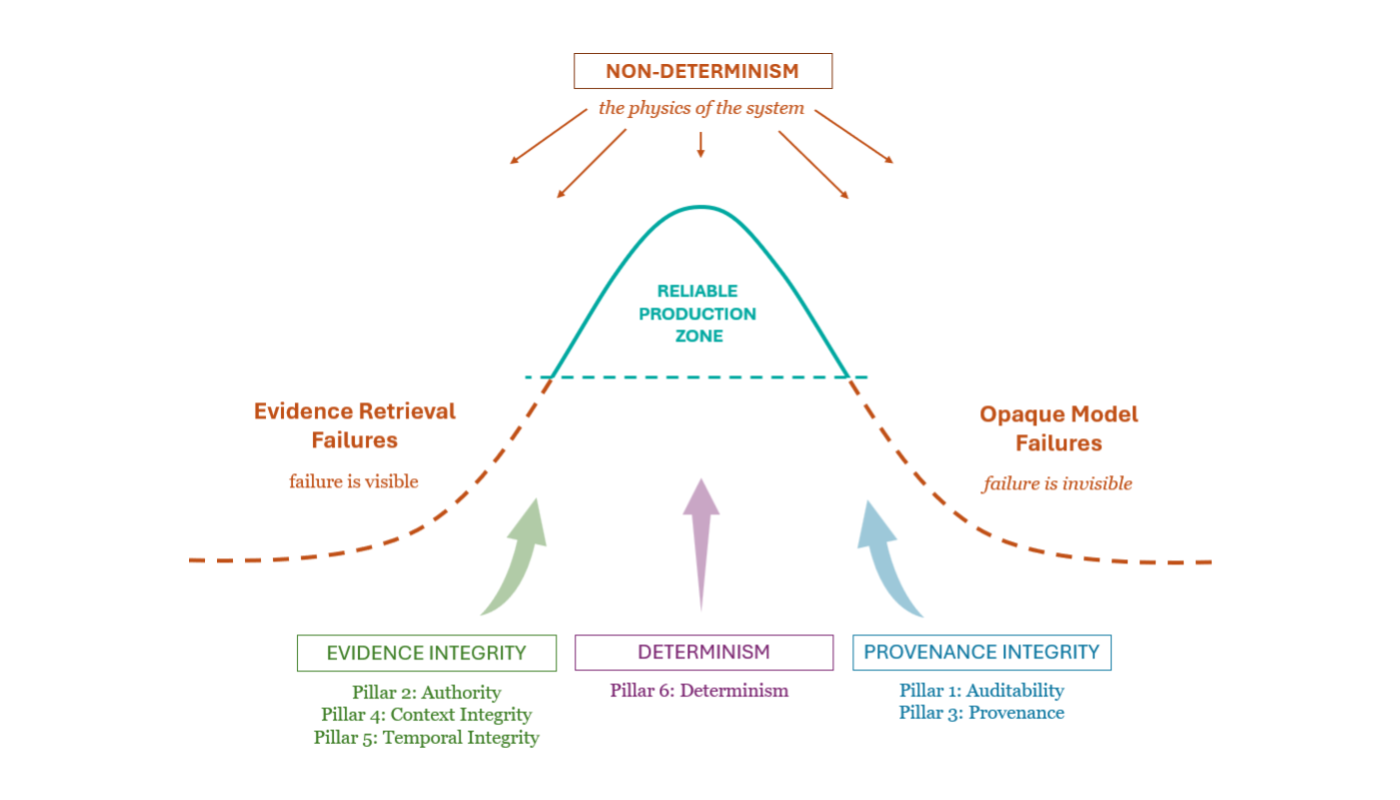

Three Axioms

The Six Pillars are six expressions of three foundational requirements: axioms that any trustworthy financial AI system must satisfy. Violate any one of them and the others cannot hold.

Evidence Integrity

A system must maximise and preserve the integrity of the evidence it operates on.

Provenance Integrity

A system must preserve the lineage of its evidence and verify it architecturally, such that every output surfaces its authoritative sources for human review. Without this, nothing downstream can be trusted.
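One way to picture this axiom is a data model in which an answer cannot exist without its sources. The sketch below is illustrative only and does not describe any particular product: `Evidence`, `Answer`, `fingerprint`, and the example source identifier are all hypothetical names. It assumes a content hash recorded at ingestion is enough to detect later alteration.

```python
import hashlib
from dataclasses import dataclass


def fingerprint(text: str) -> str:
    """Content hash used to detect any alteration of a piece of evidence."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class Evidence:
    source_id: str  # hypothetical identifier of the authoritative document
    text: str       # the passage as ingested
    digest: str     # fingerprint recorded at ingestion time

    @staticmethod
    def ingest(source_id: str, text: str) -> "Evidence":
        return Evidence(source_id, text, fingerprint(text))

    def is_intact(self) -> bool:
        """True if the text still matches the digest taken at ingestion."""
        return fingerprint(self.text) == self.digest


@dataclass(frozen=True)
class Answer:
    text: str
    sources: tuple  # tuple of Evidence items backing this answer

    def __post_init__(self):
        # Enforced structurally: no sources, or altered sources, means no answer.
        if not self.sources:
            raise ValueError("answer has no sources")
        broken = [e.source_id for e in self.sources if not e.is_intact()]
        if broken:
            raise ValueError(f"evidence altered since ingestion: {broken}")

    def citations(self) -> list:
        """Source identifiers surfaced for human review."""
        return [e.source_id for e in self.sources]
```

Because the check lives in the constructor rather than in a prompt, an unsourced answer is rejected by the architecture instead of being left to the model's discretion.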

Determinism

Non‑determinism is a property, not a feature. In finance, it must be contained by deterministic architecture.
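As a minimal sketch of what containment can mean in practice, the example below wraps a deliberately non-deterministic generator in a record keyed by its exact inputs, so that any past output can be replayed byte for byte. Everything here is an assumption made for illustration: `request_key`, `ReplayLog`, and `noisy_model` are hypothetical names, and a real system would need durable storage and a much richer input record.

```python
import hashlib
import json
import random


def request_key(question, evidence_digests, model_version):
    """Deterministic key derived from everything that shaped the output."""
    payload = json.dumps(
        {
            "question": question,
            "evidence": sorted(evidence_digests),
            "model": model_version,
        },
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class ReplayLog:
    """Records each output once so that it can be inspected and replayed."""

    def __init__(self):
        self._records = {}

    def run(self, question, evidence_digests, model_version, generate):
        key = request_key(question, evidence_digests, model_version)
        if key not in self._records:
            # The only place the non-deterministic component is invoked.
            self._records[key] = generate(question)
        return key, self._records[key]

    def replay(self, key):
        """Return the recorded output for a past request, unchanged."""
        return self._records[key]


def noisy_model(question):
    """Stand-in for a generative model: a different answer on every call."""
    return f"{question} -> {random.random()}"


log = ReplayLog()
key, first = log.run("Q3 revenue?", ["abc", "def"], "model-v1", noisy_model)
_, second = log.run("Q3 revenue?", ["def", "abc"], "model-v1", noisy_model)
```

Here `first` and `second` are identical even though `noisy_model` itself never returns the same value twice: the non-determinism remains a property of the component, while the surrounding architecture decides whether it is ever exposed.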

What are The Six Pillars?

Each pillar describes a structural failure mode: a way that financial AI systems break in production that cannot be fixed by reaching for a more capable model. The model reasons within an evidential world constructed by the surrounding architecture. Together the pillars form a diagnostic framework: a map of where that evidential world breaks, and why trust collapses with it.

The pillars are ordered deliberately. Auditability establishes the requirement for inspectability. Authority and Provenance define what legitimate evidence looks like and how its lineage must be preserved. Context Integrity and Temporal Integrity describe how that evidence can be corrupted in retrieval. Determinism closes the loop: it is the architectural property that makes all the others hold under pressure.

The framework doesn’t name any products or describe any features. It can serve as an evaluation rubric for any financial AI system, including the ones I’ve built over the years.

Read individually, each pillar identifies a failure. Together they form a containment architecture, the only structural response to the non-determinism that is inherent in these systems, and one that exists and works in production.

Financial AI earns trust only when its reasoning is constrained, inspectable, and replayable.

Outside that boundary, it isn’t really a system; it’s uncontrolled behaviour.

The Six Pillars: Foreword / What are the Six Pillars?

Where financial AI fails – the risks and challenges on the path to production

Pillar 1: Auditability

When you can’t see how an answer was formed, you can’t trust it

Pillar 2: Authority

When AI can’t tell who is allowed to speak, relevance replaces legitimacy

Pillar 3: Provenance

When you can’t see the lineage, the system invents it

Pillar 4: Context Integrity

When the evidential world breaks, the model hallucinates the missing structure

Pillar 5: Temporal Integrity

When time collapses, financial reasoning collapses with it

The Six Pillars: Conclusion

GenAI is a different kind of system. The only viable response is deterministic architecture.
