Challenges of using Large Language Models with regulated investment research and how to solve them.

Limeglass was founded in 2015 with a mission to develop proprietary Natural Language Processing (NLP) tools that help Research Providers make a much bigger impact with their product. This was only three years after Geoff Hinton’s team made the now-famous Machine Learning breakthrough in the ImageNet 2012 Challenge. The team used a Convolutional Neural Network to achieve attention-grabbing results for Machine Image Recognition. It brought Machine Learning out of relative obscurity and paved the way for a decade of extraordinary technological advances, culminating in today’s hype around ChatGPT and other Large Language Models.

Every manager in every industry is currently wondering how to take advantage of this technological progress, or simply how not to fall behind competitors. As an established player in what is still a very young industry, Limeglass has seen an explosion of enquiries about its services as a result of the recent excitement. The company has already overcome many of the tallest hurdles (which we addressed in a previous article: Don’t try this at home) and is perfectly placed to help provide services in this area to Investment Research providers and consumers.

While certain industries are considering cheap text-automation opportunities using apps like ChatGPT or open-source Large Language Models, Financial Market Research requires a different solution. Not only does most of the content sit behind carefully guarded paywalls, it is also a regulated product which is governed by different regimes in different jurisdictions all over the world. All regulated Research, though, is subject to the requirement that it can demonstrate a “reasonable basis”, making this content unsuitable for the black-box world of generative AI. Instead, any text automation (search, tagging, repackaging, etc.) must be done in such a way that the original copy written by the regulated author is preserved and accessible, so that nuance and context are not lost. Limeglass can do this.

Limeglass processes Research from banks and allows them to increase the value of that content by making it more relevant for the bank’s clients on the Buy Side. In this Research Atomisation™ process, Limeglass breaks documents down into individual paragraphs and tags those paragraphs using a comprehensive financial Knowledge Graph (KG) – an industry-largest lexicon of related financial terms.

This effectively means that research documents get turned from semi-structured or unstructured text into well-labelled data. Once this has been achieved, significant value can be extracted from the Sell Side product by making those documents searchable, targeting them to the clients that need them, and creating product that is more tailored to those clients.
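To make the idea of turning documents into labelled paragraphs concrete, here is a minimal sketch in Python. It is not Limeglass's implementation: the three-entry `TOY_LEXICON`, the `Atom` class and the `atomise` and `tag_atom` functions are all hypothetical names invented for illustration, and simple phrase matching stands in for a real Knowledge Graph. The point it shows is that each paragraph keeps the author's original wording alongside its tags.

```python
import re
from dataclasses import dataclass, field

# Hypothetical toy lexicon mapping a tag to phrases that signal it.
# A production Knowledge Graph would be far larger and hold relationships too.
TOY_LEXICON = {
    "Inflation": ["inflation", "cpi", "consumer prices"],
    "Central Banks": ["federal reserve", "ecb", "rate hike"],
    "Oil": ["crude", "brent", "opec"],
}


@dataclass
class Atom:
    """One paragraph of a research document plus the tags assigned to it."""
    doc_id: str
    index: int
    text: str  # the author's original wording, kept verbatim
    tags: set = field(default_factory=set)


def atomise(doc_id, document):
    """Split a document into paragraph-level atoms on blank lines."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", document) if p.strip()]
    return [Atom(doc_id, i, p) for i, p in enumerate(paragraphs)]


def tag_atom(atom, lexicon):
    """Attach every tag whose phrases appear as whole words in the paragraph."""
    lowered = atom.text.lower()
    for label, phrases in lexicon.items():
        if any(re.search(r"\b" + re.escape(p) + r"\b", lowered) for p in phrases):
            atom.tags.add(label)
    return atom


# Invented two-paragraph report used only to exercise the sketch.
report = "US CPI surprised to the upside.\n\nBrent crude fell after the OPEC meeting."
atoms = [tag_atom(a, TOY_LEXICON) for a in atomise("report-1", report)]
for a in atoms:
    print(a.doc_id, a.index, sorted(a.tags))
```

Once paragraphs carry tags like these, search and client targeting become simple filters over labelled data rather than free-text guesswork.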

Most of the Sell Side’s content goals, therefore, are unsuited to the risky black-box magic of Large Language Models. If you assume that the highly trained Sell Side analyst has done a good job of writing research in the first place, you should also assume that it would be better to find the relevant paragraph(s) from that original document, rather than resorting to an AI summary, given the risks of “hallucinations” and of missing human nuance.

For some carefully considered use cases, however, a few clients are exploring the potentially powerful combination of Limeglass’s reliable, well-labelled dataset in conjunction with generative AI. Limeglass can provide a targeted subset of tagged paragraphs to a Large Language Model, rather than an enormous block of unlabelled, unstructured text. This can give the model a crucial steer in the correct direction before the black box processing begins. Think of this as “summarising atomised content”.
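As a rough illustration of “summarising atomised content”, the sketch below filters a handful of tagged paragraphs by topic and assembles them into a prompt. Everything in it is an assumption made for the example: the sample paragraphs, their ids, the `select_paragraphs` and `build_prompt` helpers and the prompt wording. No model is called; the resulting string is what would be passed to whichever Large Language Model a client chooses.

```python
# Hypothetical tagged paragraphs; ids, text and tags are invented for illustration.
TAGGED_PARAGRAPHS = [
    {"id": "report-1/0", "tags": {"Inflation"},
     "text": "US CPI surprised to the upside."},
    {"id": "report-1/1", "tags": {"Oil"},
     "text": "Brent crude fell after the OPEC meeting."},
    {"id": "report-2/3", "tags": {"Inflation", "Central Banks"},
     "text": "Sticky inflation keeps a rate hike on the table."},
]


def select_paragraphs(paragraphs, wanted_tags):
    """Keep only paragraphs that carry at least one of the wanted tags."""
    return [p for p in paragraphs if p["tags"] & wanted_tags]


def build_prompt(paragraphs, topic):
    """Build a prompt that limits the model to the selected paragraphs."""
    header = (
        f"Summarise the research paragraphs below on {topic}.\n"
        "Use only the text provided and cite paragraph ids in square brackets."
    )
    body = "\n".join(f"[{p['id']}] {p['text']}" for p in paragraphs)
    return header + "\n\n" + body


selected = select_paragraphs(TAGGED_PARAGRAPHS, {"Inflation"})
prompt = build_prompt(selected, "inflation")
print(prompt)
```

Because each paragraph keeps its id in the prompt, any statement in the resulting summary can be traced back to the original text it came from.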

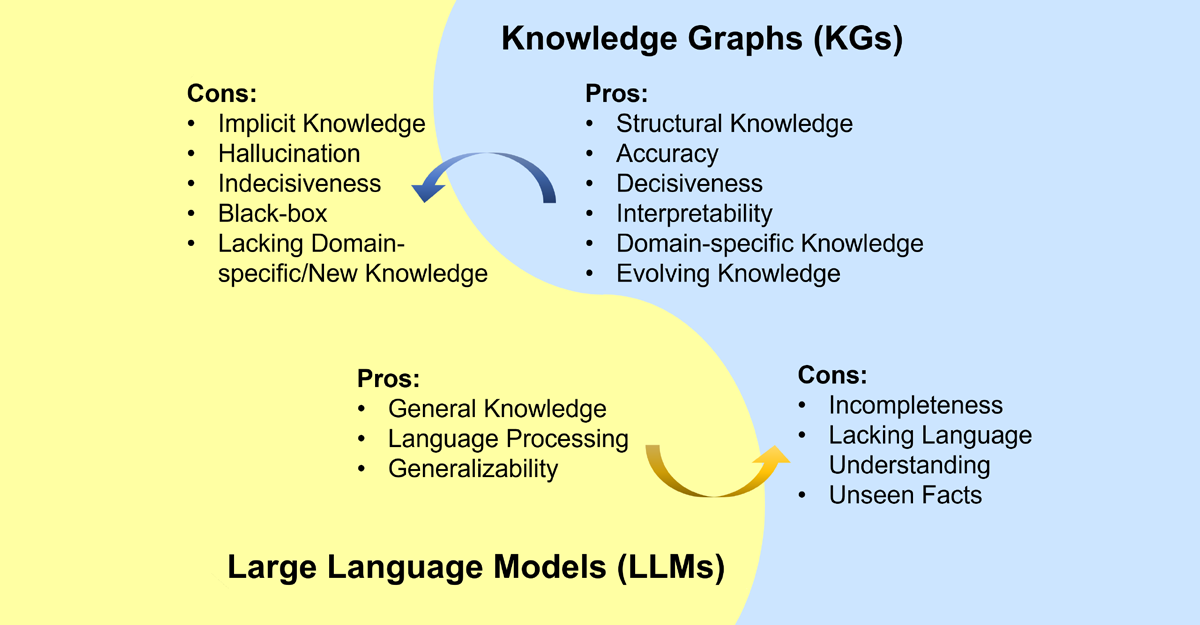

Researchers from Griffith University and Monash University (both in Australia) published an academic paper looking into the future of LLMs and Knowledge Graphs (KGs). They conclude that “it is complementary to unify LLMs and KGs together and simultaneously leverage their advantages” given the relative strengths and weaknesses of both, summarised here:

Source: Journal of latex class files, vol. 14, no. 8, August 2021

Part of the Limeglass tech stack is its industry-leading lexicon of >160,000 Financial Terms, organised meticulously into a smart ontology. Or, put another way, it is a gigantic, ever-growing Knowledge Graph.

So, what might a Limeglass Knowledge Graph and Large Language Model collaboration look like?

Naturally, there are many exciting ways this could be done, but one example could be a human + machine workflow to summarise high volumes of complex content (only where permitted by Regulators, of course).

Imagine a team of Salespeople on a trading floor writing their daily emails to clients in the early hours of the day. There is never enough time to read all the Research reports published overnight. But wouldn’t it be great if they had a streamlined way to assess which pieces of Research to read (identified using Limeglass tags), and if they were provided with draft summaries of that content (using Generative AI) alongside the original?

Using those two technologies together, the Sales team can make quick, informed decisions that will provide a richer content experience to their clients without passing risky levels of responsibility for relevance and accuracy to a machine. It is much easier for people to read two pieces of content (the original and the AI summary) and review the accuracy of the latter than it is to read the original and come up with their own manually written summary. Providing the content this way also creates a natural audit trail should any summaries need to be reviewed at a later date.
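One way to picture the audit trail is as a record that stores the original paragraph, the draft summary and the reviewer's decision together. The Python sketch below is purely illustrative: the `ReviewRecord` fields, the `record_review` and `to_audit_line` helpers and the sample values are assumptions, not a description of any Limeglass product.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class ReviewRecord:
    """Pairs an original paragraph with its draft summary and the human decision."""
    paragraph_id: str
    original_text: str
    draft_summary: str
    reviewer: str
    approved: bool
    reviewed_at: str  # ISO 8601 timestamp in UTC


def record_review(paragraph_id, original_text, draft_summary, reviewer, approved):
    """Create a review record stamped with the current UTC time."""
    return ReviewRecord(
        paragraph_id=paragraph_id,
        original_text=original_text,
        draft_summary=draft_summary,
        reviewer=reviewer,
        approved=approved,
        reviewed_at=datetime.now(timezone.utc).isoformat(),
    )


def to_audit_line(record):
    """Serialise a record as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


# Invented example values, used only to exercise the sketch.
record = record_review(
    paragraph_id="report-1/0",
    original_text="US CPI surprised to the upside.",
    draft_summary="US inflation came in higher than expected.",
    reviewer="sales-desk-user",
    approved=True,
)
line = to_audit_line(record)
print(line)
```

Keeping the original text inside the record means a later review does not depend on the source document still being available in the same form.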

So if you are a producer or consumer of Investment Research content and you are looking to benefit from the rapid technological progress across the broad realm of Natural Language Processing, don’t simply pass all your content to a black box and hope for the best. Come to the experts instead.

Oliver Hunt

Head of Client Solutions